Silicon vs. Silicon:

Inside the AI-Powered Cyber War That Will Define the Future of Digital Security

Written by:

Principal Consultant

Sapience Consulting

Artificial intelligence has quietly but decisively reshaped the digital world over the past few years. What began as a set of tools for automation, analytics, and innovation has evolved into a powerful force that now operates at the very heart of cyberspace. Today, AI is not only transforming how organisations defend themselves—it is also redefining how cyber attackers plan, execute, and scale their operations.

We are now living in the era of an AI-powered cyber arms race, where both sides use intelligent systems to outthink, outpace, and outmaneuver one another. Understanding this shift is no longer optional. It is foundational to how organisations will secure themselves, and govern their technologies, in the years ahead.

The Rise of AI as a Cyber Force Multiplier

Recent advances in machine learning, deep learning, and generative AI have dramatically lowered the barrier to entry for sophisticated capabilities. Models can now generate human-like language, write functional code, analyse massive datasets, and adapt autonomously to changing conditions. What once required specialised expertise and significant resources can now be achieved with readily available tools.

For enterprises, this has unlocked enormous value: faster insights, automated operations, predictive decision-making, and scalable innovation. However, the same accessibility has empowered threat actors, turning AI into a force multiplier for cybercrime and cyber espionage.

When Attackers Went Intelligent

Cyber attackers have proven to be highly adaptive, and AI has become one of their most potent enablers.

AI in the Attacker’s Toolkit

Hyper-Realistic Phishing and Social Engineering

Generative AI has revolutionised phishing. Attackers can now craft highly personalised emails, messages, and even voice interactions that mirror real executives, colleagues, or service providers. These messages are context-aware, grammatically flawless, and emotionally persuasive—making them far harder to detect.

Key Insight: Beyond “bad grammar.” AI crafts context-aware, emotionally persuasive deception.

Deepfakes and Synthetic Deception

AI-generated audio and video deepfakes have already been used in real-world fraud cases to impersonate senior executives and authorise financial transfers. As these tools improve, trust in traditional verification methods will continue to erode.

Key Insight: Trust is eroding. If you can’t believe your ears or eyes, traditional verification is dead.

Self-Adapting Malware

Machine learning enables malware to alter its behavior dynamically, evade detection, and select optimal attack paths based on the target environment. This significantly reduces the effectiveness of static, signature-based defenses.

Key Insight: No more signatures. This malware learns your environment to stay invisible.

Automated Vulnerability Discovery

AI models can scan code repositories, configurations, and exposed systems to identify exploitable weaknesses at machine speed, accelerating the attack lifecycle.

Key Insight: Machine-speed exploitation. Attackers find the hole before you know the wall exists.

The result is a cyber threat landscape that is faster, smarter, and far more scalable than ever before.

When Defenders Strike Back with AI

Fortunately, defenders are not standing still. Security teams are increasingly embedding AI into their defensive architectures to counter the speed and sophistication of modern attacks.

AI as the Backbone of Modern Cyber Defense

Behavioral Threat Detection

AI-driven security platforms analyse user behavior, network traffic, and system activity to detect subtle anomalies that signal compromise—often before traditional alerts would trigger.

The Legacy Way: Waiting for a known virus signature.

The AI Way: Spotting the “Subtle Anomaly.” AI learns what “normal” looks like so it can catch the first sign of “strange” before a breach occurs.
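
To make the idea of learning "normal" concrete, here is a minimal sketch of baseline-and-deviation detection. It is not how any particular security platform works: the function names, the sample login counts, and the threshold of 3 standard deviations are illustrative assumptions, and production systems rely on far richer behavioral models.

```python
from statistics import mean, stdev


def anomaly_scores(baseline, observed):
    """Return z-scores of observed values against the baseline's mean and spread."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # Flat baseline: identical values score 0, anything else is maximally unusual.
        return [0.0 if x == mu else float("inf") for x in observed]
    return [(x - mu) / sigma for x in observed]


def flag_anomalies(baseline, observed, threshold=3.0):
    """Return the observed values whose absolute z-score exceeds the threshold."""
    scores = anomaly_scores(baseline, observed)
    return [x for x, z in zip(observed, scores) if abs(z) > threshold]


# Hypothetical data: logins per hour for one account.
baseline_logins = [4, 5, 6, 5, 4, 6, 5, 5, 4, 6]  # what "normal" looked like
todays_logins = [5, 6, 42, 4]                      # one hour stands out

flagged = flag_anomalies(baseline_logins, todays_logins)
print(flagged)
```

The point of the sketch is the shift in approach: nothing here matches a known signature, yet the unusual hour is still surfaced because it departs from the learned baseline.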

Automated Incident Response

AI-enabled security orchestration tools can automatically isolate infected systems, block malicious activity, and initiate remediation workflows in seconds rather than hours.

The Legacy Way: Manual isolation (Hours/Days).

The AI Way: Machine-Speed Remediation. Infected systems are isolated in seconds, preventing lateral movement across your network.
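
The isolate, block, and remediate sequence described above can be sketched as a simple playbook. Everything in this example is hypothetical: the `Alert` fields, the 1 to 10 severity scale, the threshold, and the action names are assumptions made for illustration, and the actions are only recorded in a list rather than sent to any real orchestration product.

```python
from dataclasses import dataclass, field


@dataclass
class Alert:
    host: str
    severity: int   # hypothetical scale: 1 (low) to 10 (critical)
    indicator: str  # e.g. a suspicious IP address or file hash


@dataclass
class ResponseLog:
    actions: list = field(default_factory=list)


def respond(alert, log, isolate_threshold=7):
    """Record the playbook steps for an alert; high severity triggers containment."""
    if alert.severity >= isolate_threshold:
        log.actions.append(("isolate_host", alert.host))
        log.actions.append(("block_indicator", alert.indicator))
        log.actions.append(("open_remediation_ticket", alert.host))
    else:
        # Lower-severity alerts are left for a human analyst to review.
        log.actions.append(("queue_for_analyst", alert.host))
    return log


critical = respond(Alert("ws-17", 9, "203.0.113.5"), ResponseLog())
routine = respond(Alert("ws-22", 3, "203.0.113.9"), ResponseLog())
print(critical.actions)
print(routine.actions)
```

Keeping a threshold below which a human decides is a deliberate design choice in this sketch: it limits the damage an automated response can do when the underlying detection is wrong.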

Predictive Security and Risk Prioritisation

Machine learning helps organisations prioritise vulnerabilities and risks based on likelihood of exploitation and potential business impact, allowing more strategic allocation of security resources.

The Legacy Way: Patching everything, everywhere.

The AI Way: Strategic Prioritisation. Machine learning highlights the small share of vulnerabilities that carries most of the risk to your specific business.
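
One simple way to picture prioritisation by likelihood and impact is to multiply the two and sort. The sketch below assumes a likelihood estimate between 0 and 1 (which in practice might come from a trained model) and a business impact rating from 1 to 10. The vulnerability identifiers and values are invented for illustration.

```python
def risk_score(vuln):
    """Expected-risk style score: likelihood of exploitation times business impact."""
    return vuln["likelihood"] * vuln["impact"]


def prioritise(vulns):
    """Return vulnerabilities ordered from highest to lowest risk score."""
    return sorted(vulns, key=risk_score, reverse=True)


# Hypothetical findings; likelihood in [0, 1], impact on a 1-10 scale.
vulns = [
    {"id": "VULN-A", "likelihood": 0.9, "impact": 8},   # likely and damaging
    {"id": "VULN-B", "likelihood": 0.1, "impact": 10},  # severe but unlikely
    {"id": "VULN-C", "likelihood": 0.5, "impact": 3},   # moderate on both counts
]

ranked_ids = [v["id"] for v in prioritise(vulns)]
print(ranked_ids)
```

Note that the finding with the highest raw impact ends up last: severity alone does not decide the order once likelihood of exploitation is taken into account.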

Fraud and Identity Protection

AI plays a critical role in detecting account takeovers, anomalous transactions, and identity fraud, especially in financial services and digital platforms.

The Legacy Way: Static passwords and MFA.

The AI Way: Continuous Verification. Analysing typing speed, location, and transaction patterns to stop fraud in real time.
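
As a rough illustration of continuous verification, the sketch below combines the three signals mentioned above (typing speed, location, and transaction pattern) into a single session score. The weights, thresholds, and function names are assumptions chosen for readability, not values from any real fraud engine, which would typically learn them from data.

```python
def session_risk(typing_cpm, usual_typing_cpm, location, known_locations,
                 amount, typical_amount):
    """Return a risk score from 0 to 100 built from three hypothetical signals."""
    points = 0
    # Typing speed more than 30% away from the user's usual rate.
    if abs(typing_cpm - usual_typing_cpm) / usual_typing_cpm > 0.30:
        points += 30
    # Session originates from a location not seen before for this user.
    if location not in known_locations:
        points += 30
    # Transaction is more than five times the user's typical amount.
    if amount > 5 * typical_amount:
        points += 40
    return points


def decide(points):
    """Map a risk score to an action: allow, ask for extra proof, or block."""
    if points >= 70:
        return "block"
    if points >= 30:
        return "step_up"
    return "allow"


usual = decide(session_risk(300, 310, "SG", {"SG", "MY"}, 120, 100))
odd_typing = decide(session_risk(150, 310, "SG", {"SG", "MY"}, 120, 100))
suspicious = decide(session_risk(300, 310, "BR", {"SG", "MY"}, 900, 100))
print(usual, odd_typing, suspicious)
```

Unlike a one-time password check, this evaluation can be repeated on every action in a session, which is what makes the verification continuous.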

Security leaders such as Kaspersky have described this dynamic as an ongoing AI-versus-AI confrontation—one where adaptability and speed are decisive advantages.

The Next Phase of the AI Cyber Arms Race

Looking forward, several trends are poised to shape the future of cybersecurity:

Full-Scale Automation

Both attacks and defenses will increasingly operate autonomously, reducing human response windows and raising the stakes for accuracy and control.

Adversarial AI

Attackers will design AI systems specifically to deceive or poison defensive models, forcing defenders to continuously retrain and validate their tools.

Generative AI at Scale

Deepfakes, synthetic identities, and automated misinformation campaigns will become more common, targeting not just systems but human trust itself.

AI-Enhanced Zero Trust

Continuous, AI-driven assessment of identity, behavior, and context will become central to access control and security decision-making.

Rising Regulatory Scrutiny

Governments will impose stricter oversight on AI use in security-sensitive and critical environments, demanding transparency and accountability.

How AI Will Redefine Enterprise Security Strategies

As AI becomes embedded in both attack and defense, organisations must fundamentally rethink their security models. Perimeter-based defenses and purely reactive approaches will no longer suffice.

Future-ready security programmes will:

Integrate AI natively across detection, response, and risk management

Combine cybersecurity expertise with data science and AI engineering skills

Continuously test AI models against manipulation and bias

Accept that many security decisions will be made at machine speed

This marks a shift from reactive security to predictive, adaptive, and intelligence-driven defense.
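
Testing a model against manipulation can start with something as simple as checking whether small input changes flip its verdict. The sketch below is a toy under stated assumptions: `toy_detector` is a hypothetical stand-in for a real model, and the exhaustive perturbation search is only practical for a handful of features. Real adversarial testing uses far more sophisticated techniques.

```python
from itertools import product


def is_stable(classify, sample, epsilon):
    """Return True if no combination of +/- epsilon nudges changes the label."""
    baseline = classify(sample)
    for signs in product((-1, 0, 1), repeat=len(sample)):
        perturbed = [x + s * epsilon for x, s in zip(sample, signs)]
        if classify(perturbed) != baseline:
            return False
    return True


def toy_detector(features):
    """Hypothetical stand-in for a model: flags when the feature sum exceeds 10."""
    return "malicious" if sum(features) > 10 else "benign"


clear_case = is_stable(toy_detector, [2.0, 3.0], epsilon=0.5)
borderline_case = is_stable(toy_detector, [5.0, 5.4], epsilon=0.5)
print(clear_case, borderline_case)
```

A sample that fails this check sits close to the model's decision boundary, which is exactly where an attacker would try to nudge inputs to slip past detection.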

The Governance Imperative: Who Controls the Machines?

With AI playing a central role in security and operations, governance becomes critical. AI systems can make decisions that affect access, monitoring, investigations, and even disciplinary actions. Without proper oversight, these systems may introduce bias, violate privacy expectations, or create regulatory exposure.

Key governance questions include:

Who is accountable for AI-driven security decisions?

How are AI models trained, validated, and monitored?

Can AI decisions be explained to regulators, auditors, and stakeholders?

What happens when AI gets it wrong?

Strong governance ensures that AI remains a tool for resilience rather than a source of uncontrolled risk.
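
One practical way to approach the questions above is to record every AI-driven decision in a form that auditors can read later. The sketch below shows what such a record might contain. The field names, the model version string, and the owner role are all hypothetical, and a real deployment would follow the organisation's own logging and retention policies.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class DecisionRecord:
    decision: str                        # what the AI system did
    model_version: str                   # which model produced the decision
    accountable_owner: str               # who answers for this class of decision
    top_factors: List[str]               # plain-language reasons, for explainability
    overridden_by: Optional[str] = None  # set when a human reverses the decision
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_audit_line(record):
    """Serialise a decision record as one JSON line for an append-only audit log."""
    return json.dumps(asdict(record), sort_keys=True)


record = DecisionRecord(
    decision="block_login",
    model_version="fraud-model-2024-01",              # hypothetical identifier
    accountable_owner="head-of-security-operations",  # hypothetical role
    top_factors=["new_location", "unusual_amount"],
)
audit_line = to_audit_line(record)
print(audit_line)
```

Each field maps to one of the governance questions: the owner answers accountability, the model version supports validation and monitoring, the factors support explanation, and the override field captures what happens when the AI gets it wrong.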

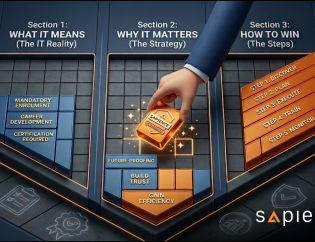

Does Governance Kill Innovation or Enable It?

A common fear is that governance frameworks will slow AI adoption or blunt its benefits. In practice, the opposite is often true.

Effective governance enables sustainable innovation.

Well-designed oversight frameworks:

Provide clear guardrails without stifling experimentation

Enable responsible risk-taking aligned with business objectives

Build trust with customers, regulators, and employees

Prevent costly failures that can derail AI initiatives entirely

Organisations that treat governance as an enabler—not an obstacle—are better positioned to scale AI successfully.

Conclusion: Winning the AI Cyber War Without Losing Trust

AI has irrevocably transformed cyberspace into a domain of intelligent conflict. Attackers and defenders now operate at machine speed, leveraging algorithms that learn, adapt, and evolve. In this environment, security is no longer just about tools—it is about strategy, governance, and balance.

The organisations that will thrive are those that can harness AI for defense while maintaining strong oversight, ethical discipline, and accountability. In the end, the real victory in the AI-powered cyber war will not belong to those who move fastest—but to those who move smartest, safest, and with trust intact.
